The Goal

The goal was to develop a robust Deep Reinforcement Learning (DRL) agent that navigates a holonomic mobile robot (Scout Mini) to randomized target poses in a 3D environment. The targets were high-precision positioning (error < 5 cm) and orientation (error < 1°) with minimal travel time.

The How

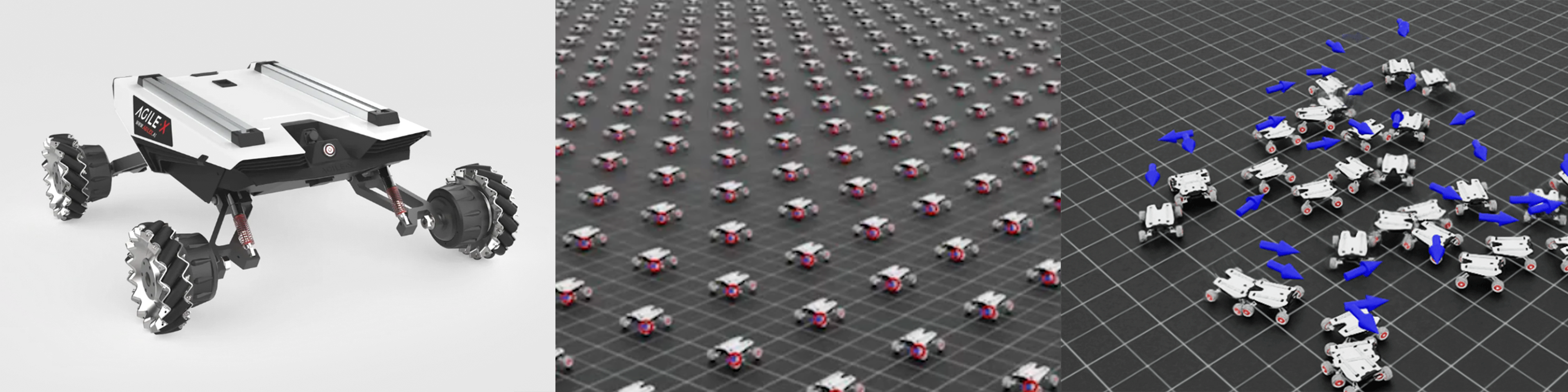

- Simulation Framework: Leveraged NVIDIA Isaac Lab and Isaac Sim to create a high-fidelity 3D training environment.

- Parallel Training: Ran 4096 environments simultaneously on an NVIDIA RTX 3090, enabling rapid data collection and iteration.

Training of the Scout Mini DRL agent in NVIDIA Isaac Sim

Movement of the trained Scout Mini agent in simulation

- State Space: Engineered an observation vector consisting of the relative goal position, the orientation difference, the current velocity, and the previous actions to support stable convergence.
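The observation vector described above can be sketched as follows. This is only an illustrative sketch, not the project's actual code: the function names, the choice to express the goal offset in the robot's body frame, and the 3-component velocity and action layout (`vx`, `vy`, yaw rate) are all assumptions.

```python
import math


def wrap_angle(angle):
    """Wrap an angle in radians to the interval [-pi, pi)."""
    return (angle + math.pi) % (2.0 * math.pi) - math.pi


def build_observation(robot_xy, robot_yaw, goal_xy, goal_yaw, velocity, prev_action):
    """Assemble a flat observation list (hypothetical layout).

    Layout: [rel_x, rel_y, yaw_error, *velocity, *prev_action]
    Positions are in meters, angles in radians.
    """
    dx = goal_xy[0] - robot_xy[0]
    dy = goal_xy[1] - robot_xy[1]

    # Rotate the world-frame offset into the robot's body frame
    # (assumption: the policy sees the goal relative to its own heading).
    c, s = math.cos(robot_yaw), math.sin(robot_yaw)
    rel_x = c * dx + s * dy
    rel_y = -s * dx + c * dy

    # Wrapped orientation difference avoids a discontinuity at +/-180 degrees.
    yaw_error = wrap_angle(goal_yaw - robot_yaw)

    return [rel_x, rel_y, yaw_error, *velocity, *prev_action]


obs = build_observation(
    robot_xy=(0.0, 0.0),
    robot_yaw=math.pi / 2,
    goal_xy=(0.0, 1.0),
    goal_yaw=math.pi / 2,
    velocity=(0.0, 0.0, 0.0),
    prev_action=(0.0, 0.0, 0.0),
)
```

In this example the goal lies one meter straight ahead of the robot, so the body-frame offset is approximately `(1, 0)` and the yaw error is zero. Feeding the previous action back into the observation is a common way to let the policy produce smoother commands.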

The Results

- Rapid Convergence: The agent converged to a stable mean reward in approximately 400 epochs, with a total training time of just 14 minutes.

- High Precision: Achieved an average distance error of 2.68 cm (well within the 5 cm threshold) and an average yaw error of 0.0004° (well within the 1° threshold).

- Efficiency: The robot reached random goals within a 4-meter radius in an average of 2.98 seconds.
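The precision figures above can be checked against the stated thresholds with a small helper like the one below. This is a sketch under assumptions: the `(x, y, yaw)` pose format and the function names are hypothetical, and the project's own evaluation code may compute these errors differently.

```python
import math


def pose_errors(robot_pose, goal_pose):
    """Return (distance error in cm, absolute yaw error in degrees).

    Poses are (x, y, yaw) tuples with x, y in meters and yaw in radians
    (assumed format).
    """
    dx = goal_pose[0] - robot_pose[0]
    dy = goal_pose[1] - robot_pose[1]
    distance_cm = math.hypot(dx, dy) * 100.0

    # Wrap the yaw difference to [-pi, pi) so that, for example,
    # 179 deg vs. -179 deg counts as a 2 deg error rather than 358 deg.
    yaw_diff = (goal_pose[2] - robot_pose[2] + math.pi) % (2.0 * math.pi) - math.pi
    return distance_cm, abs(math.degrees(yaw_diff))


def meets_targets(distance_cm, yaw_deg, max_distance_cm=5.0, max_yaw_deg=1.0):
    """Check errors against the 5 cm / 1 degree thresholds from the goal."""
    return distance_cm < max_distance_cm and yaw_deg < max_yaw_deg


# The reported averages pass both thresholds.
reported_ok = meets_targets(2.68, 0.0004)

# Example of the wrap-around case for yaw.
dist_cm, yaw_deg = pose_errors(
    (0.0, 0.0, math.radians(179.0)),
    (0.03, 0.04, math.radians(-179.0)),
)
```

Wrapping the yaw difference matters for an orientation target this tight: without it, headings on either side of ±180° would register as a near-full-turn error.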